Agentic Data Engineering vs Traditional Data Engineering

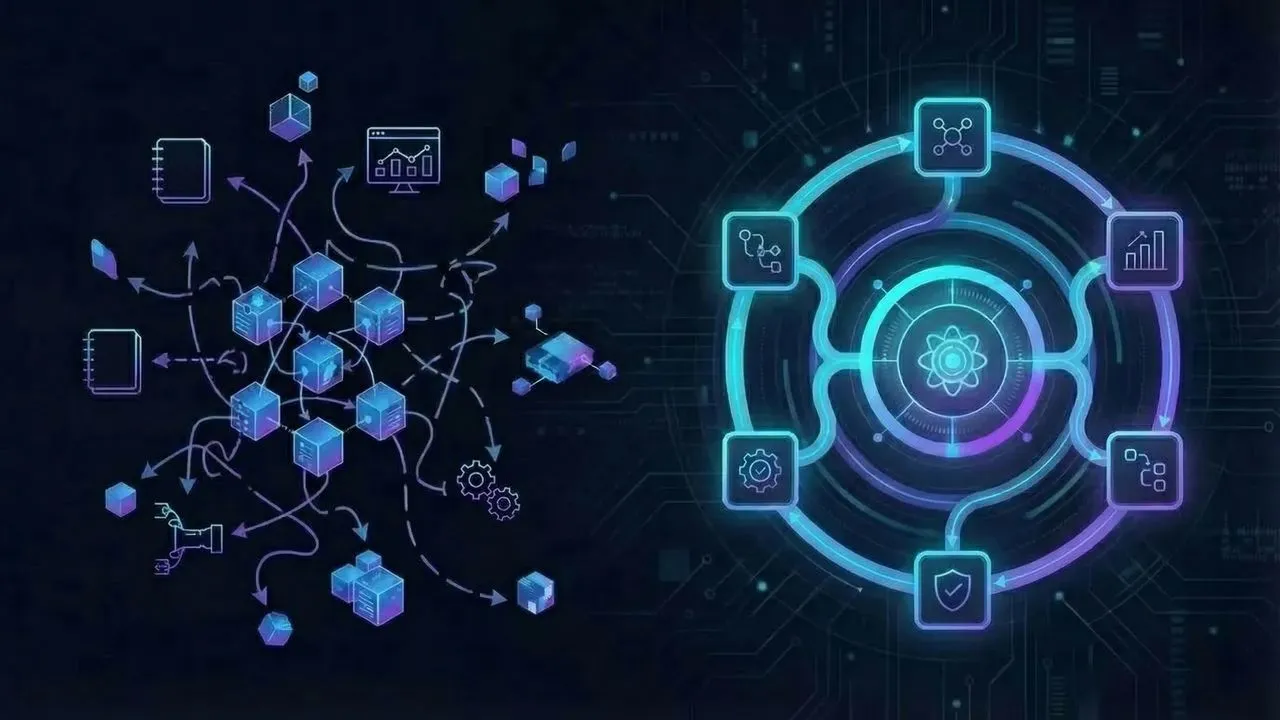

Traditional data engineering is built around tickets, handoffs, scripts, dashboards, and human coordination. Agentic data engineering shifts that model toward software agents that can understand context, plan tasks, use tools, and execute parts of the workflow with human oversight.

That does not mean replacing data engineers with a black box. It means moving from manual, fragmented work toward structured context, workflow-aware execution, and reliable automation.

For teams evaluating AI in the data stack, that distinction matters. A data engineering agent is not just a better autocomplete layer. In a production setting, it needs access to metadata, semantics, lineage, rules, and workflows if it is going to do useful work without creating new operational risk.

What changes in practice

Here’s the short version of what changes when a team moves from traditional data engineering toward an agentic model:

- Engineers spend less time rewriting repetitive SQL and more time defining reusable context, rules, and workflow patterns.

- Work moves from ticket-by-ticket execution toward intent-to-workflow orchestration.

- Agents assist with discovery, planning, validation, troubleshooting, and execution—not just code generation.

- Metadata, semantic definitions, and lineage become operational inputs, not background documentation.

- Human review shifts upstream into policy, guardrails, and approval points instead of only manual implementation.

- Reliability depends less on one-off prompts and more on whether the system is grounded in structured context.

What is agentic data engineering?

Agentic data engineering is an operating model where AI agents participate in data workflows by reasoning over structured context and taking bounded actions across tools and systems.

The important phrase there is structured context. In practice, that means the agent is not working from a prompt alone. It is working from a combination of schema knowledge, semantic definitions, lineage, metrics, operational rules, historical decisions, and workflow constraints.

That is the difference between a demo and a production approach.

Datus frames this clearly in its docs: data engineers move from writing repetitive SQL toward building reusable, AI-ready context, where corrections, domain rules, and data knowledge become long-term memory for more accurate, domain-aware work. That is a useful way to understand the category. The system becomes more capable because context becomes reusable.

What traditional data engineering still does well

Traditional data engineering exists for a reason. It is still the default operating model in most organizations because it is understandable, controllable, and proven.

In a traditional model, teams usually work like this:

- A business request or operational issue comes in.

- A data engineer investigates schemas, jobs, dashboards, and code.

- They write or modify SQL, pipeline logic, tests, or transformations.

- They validate the result manually or with existing checks.

- They deploy the change and monitor what happens next.

This approach is often slower than teams want, but it has strengths:

- Responsibility is clear.

- Review and governance are explicit.

- Domain knowledge stays close to experienced engineers.

- Existing stack components are already built around this model.

The weakness is not that the model is wrong. The weakness is that too much execution depends on manual re-interpretation of the same context over and over again.

Why traditional workflows start to break under scale

As teams grow, the cost of traditional data engineering shows up in a few predictable ways:

1. Repeated context reconstruction

Engineers keep re-learning the same business logic, metric definitions, lineage paths, and platform rules across tickets.

2. Fragmented system knowledge

Critical knowledge lives across docs, dbt models, dashboards, Slack threads, catalogs, and people’s heads.

3. Slow operational loops

Simple tasks still require multiple handoffs: request, clarification, implementation, validation, revision, deployment.

4. Tool sprawl without coordination

Warehouses, BI, semantic layers, orchestration tools, catalogs, and observability systems all contain part of the truth, but not a shared execution layer.

5. Limited leverage from AI copilots

Copilots help with drafting code or queries, but they usually do not understand enough context to safely carry work forward across the full workflow.

That’s the gap agentic data engineering is trying to close.

Agentic data engineering vs traditional data engineering

The cleanest way to compare the two models is to look at how work is organized.

| Dimension | Traditional data engineering | Agentic data engineering |

|---|---|---|

| Core unit of work | Tickets, scripts, manual tasks | Goals, workflows, and bounded agent actions |

| Primary execution model | Human-driven implementation | Human-directed, agent-assisted execution |

| Context source | Docs, tribal knowledge, scattered tools | Structured context across metadata, semantics, lineage, and rules |

| Automation style | Static pipelines and task scripts | Adaptive workflow automation with guardrails |

| Role of AI | Optional copilot for drafting | Active participant in discovery, planning, validation, and execution |

| Reliability model | Engineer experience + testing | Context grounding + guardrails + testing + human approval |

| Speed bottleneck | Manual interpretation and handoffs | Context quality, system integration, and control design |

| Knowledge reuse | Often implicit and person-dependent | Explicit and reusable across agents and teams |

| Failure mode | Slow delivery and hidden tribal knowledge | Fast mistakes if context, permissions, or controls are weak |

| Best fit | Stable teams, lower workflow volume, high manual ownership | Complex environments that need more leverage without losing control |

This is why “agentic” is not just a synonym for “automated.” The change is architectural. Traditional systems automate predefined steps. Agentic systems can help determine which steps should happen next—if they have enough context and the right boundaries.

The difference between a copilot and a data engineering agent

This is where many teams get confused.

A copilot usually helps a person complete a task inside one interface. It can suggest SQL, explain an error, or summarize documentation. Useful, yes. But the human still carries most of the workflow.

A data engineering agent is more operational. It can support or execute parts of a multi-step workflow such as:

- discovering the right assets

- interpreting semantic definitions

- tracing lineage to understand upstream impact

- proposing a plan

- generating implementation steps

- validating results against rules or expectations

- triggering the next bounded action with approval where needed

That is a different level of responsibility.

It also explains why shallow prompting is not enough. The more an agent moves from suggestion to execution, the more it needs grounded access to the real data environment.

What autonomous data engineering actually means

The term autonomous data engineering is easy to oversell. In practice, it should mean something narrower and more useful:

Autonomous data engineering is the selective automation of data engineering tasks through agents that can reason over context, use tools, and operate within explicit controls.

That means good implementations usually look like this:

- autonomous for repetitive or well-bounded steps

- supervised for changes with downstream risk

- reviewable through logs, checks, and approval points

- grounded in metadata, semantics, lineage, and governance

What it should not mean:

- one prompt builds and runs your full data platform

- agents make uncontrolled schema or metric changes in production

- teams no longer need data engineering judgment

The strongest agentic systems reduce manual toil while making human expertise more scalable.

Where agentic data engineering creates the most value

The model tends to work best in workflows where context exists but access to that context is scattered or slow.

Repetitive implementation work

Agents can accelerate common patterns in SQL generation, transformation scaffolding, test creation, and issue triage when they are grounded in the right context.

Asset discovery and explanation

A large share of engineering time goes to figuring out what already exists. Agents become more useful when they can search catalogs, semantic models, lineage, and operational history together.

Workflow orchestration

Instead of stopping at an answer, an agent can help move work forward: identify dependencies, prepare changes, validate outputs, and route the next step.

Faster incident response

When pipelines break or data quality shifts, agents can help gather context quickly: what changed, what depends on it, what likely broke, and what should be checked next.

Consistency across teams

If domain definitions, metrics, and engineering patterns are encoded as reusable context, teams become less dependent on a few institutional memory holders.

Where traditional data engineering still wins

Agentic data engineering is not automatically better in every environment.

Traditional workflows still make sense when:

- the environment is small and well understood

- the cost of mistakes is high and change volume is low

- context is not well modeled yet

- governance and approval systems are immature

- teams need deterministic pipelines more than adaptive workflow support

In other words, agentic systems do not remove the need for engineering maturity. They often increase the need for clean context, clear controls, and operational discipline.

What has to be true for agentic data engineering to work

If a team wants the benefits without the chaos, a few conditions matter more than model quality alone.

1. Structured context has to exist

Agents need more than schemas. They need business meaning, lineage, metrics, rules, and known workflow patterns.

2. Tool access has to be intentional

An agent that cannot act is limited. An agent that can act without boundaries is dangerous. Real systems need bounded permissions and explicit action design.

3. Human-in-the-loop design has to be built in

Approval steps, review surfaces, escalation paths, and observable logs are part of the architecture, not an afterthought.

4. Reliability has to be evaluated operationally

The real question is not “Did the model answer fluently?” It is “Did the workflow produce a correct, governed, reviewable outcome?”

5. The system has to work with the existing stack

Most data teams are not replacing warehouses, lakehouses, dbt, BI, catalogs, or orchestration systems. Agentic data engineering has to sit on top of that reality and make it more usable.

That last point is one reason the category is becoming more credible. The strongest platforms are not asking teams to abandon their stack. They are trying to turn fragmented stack context into an execution layer.

A practical operating model shift

The real transition is not from “people” to “AI.” It is from manual context reconstruction to reusable context-driven execution.

That changes the role of the data engineer in a useful way:

In a traditional model, engineers spend more time on:

- hunting for context

- reinterpreting business rules

- manually stitching tools together

- repeating common implementation patterns

- serving as the only execution bridge across systems

In an agentic model, engineers spend more time on:

- defining semantic logic and operational rules

- designing guardrails and approval flows

- shaping reusable workflows

- improving metadata quality and context coverage

- supervising and refining how agents work across the stack

That is a healthier direction for most mature teams. It makes engineering leverage more durable instead of simply demanding more individual heroics.

How Datus fits into this shift

Datus is best understood as part of this broader move toward agentic data engineering.

Based on its documentation and positioning, Datus emphasizes a few principles that align with the category:

- reusable AI-ready context instead of one-off prompting

- domain-aware work grounded in prior corrections and rules

- specialized subagents rather than a single generic assistant

- workflow-aware support for real data engineering tasks

- a system that works with the existing data stack rather than replacing it

That matters because agentic data engineering only becomes useful when context can be carried into execution. The problem is not just generating an answer. The problem is turning intent into reliable work.

Internal next reads

If you’re mapping this space in more detail, the next useful reads are:

- What Is a Data Engineering Agent? for the core definition layer

- Data Engineering Agent Architecture: From Prototype to Production with Datus for implementation patterns

- 7 High-Impact Data Engineering Agent Use Cases (Powered by Datus) for workflow-level examples

- The Layered Subagent Architecture for Data Engineering Agents for team and system design

Final takeaway

Traditional data engineering is built for correctness through manual control. Agentic data engineering is built for correctness and leverage through structured context, tool-aware execution, and human oversight.

That is why this shift is more than an AI feature trend. It is a change in how data work is organized.

The teams that benefit most will not be the ones chasing maximum autonomy. They will be the ones that build the best context, the best guardrails, and the clearest path from intent to execution.

FAQ

Is agentic data engineering the same as AI data pipeline automation?

Not exactly. AI data pipeline automation is a broader implementation topic focused on automating pipeline work. Agentic data engineering is a wider operating model that includes discovery, planning, validation, orchestration, and governed execution across data workflows.

What is a data engineering agent?

A data engineering agent is an AI system designed to support or execute parts of data engineering work using structured context, tool access, and workflow logic. It goes beyond chat or code suggestion by participating in multi-step tasks.

Does agentic data engineering replace data engineers?

No. The practical goal is to reduce repetitive manual work and make engineering knowledge more reusable. Human judgment is still required for system design, governance, review, and high-risk changes.

What makes autonomous data engineering reliable?

Reliability comes from context quality, semantic clarity, lineage awareness, bounded permissions, validation checks, and human approval where needed. Better prompting alone is not enough.

When should a team stay with a traditional data engineering model?

If the environment is small, stable, heavily regulated, or not yet well-modeled, a traditional approach may remain the better fit. Agentic systems work best when teams already have meaningful context, governance, and workflow maturity to build on.

Related Reading

- What Is a Data Engineering Agent? A Practical Guide with Datus

- Data Engineering Agent Architecture: From Prototype to Production with Datus

- 7 High-Impact Data Engineering Agent Use Cases (Powered by Datus)

- The Layered Subagent Architecture for Data Engineering Agents

Start with Datus

- GitHub: https://github.com/Datus-ai/Datus-agent

- Docs: Datus Docs

- Main site: https://datus.ai