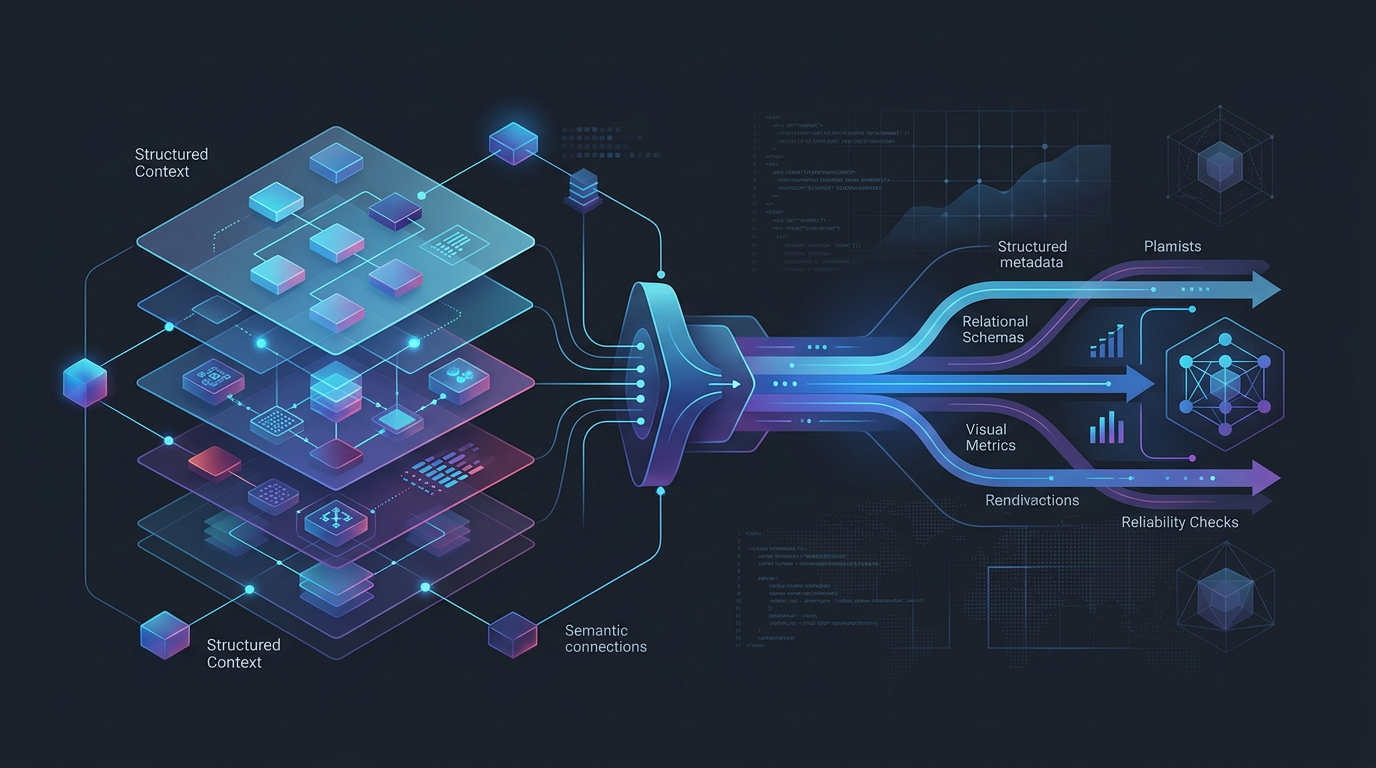

How Structured Context Improves AI Agent Output

AI agent output usually breaks for a simple reason: the model has language, but not enough operating context.

That gap shows up everywhere. An agent writes SQL against the wrong table. It answers a metrics question with a plausible but inconsistent definition. It recommends a workflow step that ignores lineage, freshness, or governance constraints. The output looks fluent, but it is not grounded.

Structured context changes that.

In data workflows, structured context means the agent is not working from prompts alone. It has access to explicit definitions, semantic models, metric logic, table relationships, lineage, ownership, workflow state, and system constraints. That does not make the agent perfect. It does make the output far more reliable, easier to evaluate, and safer to use in production.

What structured context means for AI agents

Structured context is the organized, machine-usable representation of how a data system actually works.

For an AI agent, that can include:

- semantic models for entities, dimensions, and joins

- governed metric definitions

- schema and catalog metadata

- lineage between upstream and downstream assets

- freshness, quality, and ownership signals

- workflow steps, tool permissions, and execution history

- naming conventions and business definitions

This matters because most agent failures are not purely language failures. They are context failures.

The model may understand the words "revenue by region." What it often does not know is:

- which revenue metric is the approved one

- whether refunds are included

- which regional hierarchy the business uses

- which model is production-ready

- what time grain is valid for comparison

Without that structure, the agent fills gaps with probability. With structure, it can reason against defined constraints.

Before vs after: what changes in output quality

The effect of structured context is easiest to see side by side.

Before structured context

An agent gets a prompt: "Show weekly net revenue growth for enterprise accounts in APAC."

It may:

- pick the wrong revenue field

- confuse gross revenue with net revenue

- join account and geography tables incorrectly

- use a stale or deprecated model

- ignore the business rule for enterprise segmentation

- produce a convincing chart with the wrong denominator

The output can still look polished. That is the dangerous part.

After structured context

The same agent now has access to:

- a semantic model that defines account, geography, and revenue entities

- a governed

net_revenuemetric definition - the approved enterprise account filter

- lineage showing which model is downstream of the finance reconciliation process

- freshness checks and ownership metadata

Now the agent can:

- select the correct metric instead of inferring one

- use approved joins and dimensions

- reject deprecated assets

- explain which definition it used

- surface confidence and assumptions

- produce output that is easier for teams to verify and reuse

The difference is not cosmetic. It changes whether the output is operationally useful.

Why prompts alone are not enough

Good prompts help. They do not replace system context.

A prompt can tell the model to "be careful" or "use the right metric." That is instruction, not grounding. If the model does not have access to the actual metric logic or semantic relationships, it still has to guess.

This is why prompt tuning often improves surface behavior without fixing deeper reliability problems. You may get cleaner wording or slightly better SQL style, but not consistent output across teams, dashboards, and workflows.

Structured context improves agent output in four concrete ways:

- It reduces ambiguity. The agent has defined entities, metrics, and relationships.

- It improves consistency. Different prompts can still resolve to the same approved logic.

- It makes reasoning inspectable. Teams can see which model, metric, or dependency the agent used.

- It supports safer execution. The agent can act within workflow and governance constraints, not outside them.

A simple example: from vague request to reliable answer

Consider the request: "Why did conversion drop last month?"

A generic agent may return a broad narrative based on a few tables or recent dashboard values. It might mention traffic quality, funnel friction, or seasonality. The answer could be readable and still be wrong.

An agent working with structured context behaves differently.

It can trace:

- the semantic definition of conversion

- which funnel stages are included

- whether the metric is session-based, user-based, or account-based

- which dimensions are valid for breakdown

- whether an upstream model changed during the time period

- whether data quality incidents affected the metric

Now the answer becomes more disciplined:

Conversion declined 6.8% month over month using the governed

conversion_ratemetric. The largest change came from the trial-to-activated stage for self-serve accounts in EMEA. Lineage shows a pricing event schema update landed on March 2, which also triggered a freshness warning in the attribution model. Before concluding this is behavioral, validate the upstream event mapping fortrial_startedandactivated.

That is a better output because it is anchored in definitions, dependencies, and workflow signals. It is not just a well-written guess.

Structured context is especially important for data agents

Data agents operate in systems where small semantic mistakes create large downstream problems.

If an agent misinterprets a sales metric, the result is not just a bad sentence. It can lead to:

- incorrect dashboard updates

- broken stakeholder trust

- flawed prioritization decisions

- noisy incident triage

- invalid automated actions in downstream workflows

This is why structured context matters more in production data work than in casual chat use cases.

Reliable agent output in data environments depends on more than model quality. It depends on whether the system can connect natural language requests to real operational context.

That is where semantic models and metrics definitions play a central role. They give the agent a stable layer of meaning. Instead of treating every prompt as an isolated task, the agent can reason against a shared representation of business logic.

What kinds of structured context improve output the most

Not all context has the same value. In practice, these layers tend to have the biggest effect on output quality.

1. Semantic models

Semantic models help agents understand entities, dimensions, relationships, and valid paths through the data.

This reduces:

- invalid joins

- entity confusion

- grain mismatches

- inconsistent breakdowns

2. Governed metrics

Metrics give agents a reusable definition instead of forcing them to reconstruct business logic from raw fields.

This reduces:

- metric drift across prompts

- competing definitions in different teams

- silent errors in KPI analysis

3. Lineage and dependency context

Lineage tells the agent what depends on what.

This improves:

- root cause analysis

- impact analysis

- confidence scoring

- safe action planning

4. Quality and freshness signals

An agent should know when the underlying data may be unreliable.

This improves:

- answer caution

- incident awareness

- recommendation quality

- escalation behavior

5. Workflow and tool context

The best output is often not a single answer. It is the right next action.

Workflow context helps agents understand:

- what tools are available

- what step comes next

- which actions require approval

- how to move from analysis to execution with guardrails

A practical checklist for improving AI agent output

If you want better output from an AI agent, do not start by rewriting the prompt ten times. Start by checking the context layer.

Use this checklist:

- [ ] Are core business metrics explicitly defined and reusable?

- [ ] Does the agent have access to semantic models, not just raw schemas?

- [ ] Can it distinguish production assets from deprecated or draft ones?

- [ ] Does it understand lineage and upstream dependencies?

- [ ] Can it see freshness, quality, and ownership metadata?

- [ ] Are workflow constraints and tool permissions encoded?

- [ ] Can the agent explain which definitions and assets it used?

- [ ] Can humans review, override, or approve important actions?

If most of these are missing, output quality will stay uneven no matter how polished the prompting looks.

Where Datus fits

Datus is built around a simple idea: reliable agent output requires structured context that can support reasoning and execution.

That is why the focus is not just on generating answers. It is on connecting semantic models, metrics, metadata, lineage, and workflow context so agents can operate with more discipline inside real data systems.

For teams building agentic analytics or data engineering workflows, that matters. The goal is not to make agents sound smart. The goal is to make their output usable, traceable, and aligned with how the business actually defines data.

Final takeaway

Structured context improves AI agent output because it replaces guesswork with defined meaning.

When agents can access semantic models, governed metrics, lineage, and workflow constraints, they stop behaving like isolated text generators. They become much better at producing answers and actions that hold up in production.

That is the shift teams should care about.

Not more fluent output.

More grounded output.

Next steps

- Why AI Agents Need Semantic Context to Work Reliably

- Why Reliable Data Agents Need More Than Good Prompts

- Explore Datus Docs for semantic models, metrics, and workflow context

FAQ

What is structured context for AI agents?

Structured context is the explicit system knowledge an agent can use during reasoning and execution, including semantic models, metric definitions, metadata, lineage, quality signals, and workflow constraints.

How does structured context improve AI agent output?

It improves output by reducing ambiguity, grounding the agent in approved definitions, making reasoning more consistent, and helping the system avoid invalid actions or misleading answers.

Is structured context the same as prompt engineering?

No. Prompt engineering changes how you ask. Structured context changes what the agent actually knows and can reference while working.

Why does this matter more in data workflows?

Because small semantic errors in metrics, joins, lineage, or model selection can create large downstream business errors. In data systems, plausible output is not enough.

Do semantic models and metrics layers help AI agents?

Yes. They give agents a more stable representation of business meaning, which improves consistency across prompts, users, and workflows.

ean, modern editorial illustration for a B2B data infrastructure blog: an AI agent operating between two states, left side showing chaotic unstructured prompts, tangled tables, broken charts, and ambiguous metrics; right side showing structured context with semantic models, governed metrics, lineage graphs, and workflow guardrails leading to reliable outputs. Visual style should be minimal, technical, credible, and polished. Use deep blues, white, and subtle teal accents. No cartoonish robots, no hype aesthetics, no sci-fi excess. Emphasize clarity, system structure, and production-grade data workflows.