Why AI Agents Need Semantic Context to Work Reliably

AI agents do not fail in production because they are bad at language. They fail because language alone is too weak a control surface for complex systems. If an agent is expected to work across warehouses, dbt projects, semantic layers, BI assets, metrics definitions, and operational workflows, it needs more than prompts and tool access. It needs semantic context.

Semantic context is the structured meaning around data and actions: what a metric actually means, how tables relate, which dimensions are valid, what a workflow is allowed to do, where lineage flows, and which definitions the business has already agreed on. It is not just “more context.” It is context with stable meaning. That is what makes an AI agent reliable instead of merely fluent.

For data teams, this distinction matters. An agent can generate a plausible SQL query from a schema browser and still return the wrong answer. It can find a table called orders and still misuse gross revenue, ignore refund logic, or group by a field that does not match the reporting grain. Reliability starts when the agent understands not only the words in the request, but the semantics of the system it is operating in.

Why prompts are not enough

Good prompts help agents express intent. They do not create shared meaning.

A prompt can say:

- use the right business definitions

- prefer trusted sources

- avoid duplicate metrics

- follow governance rules

But unless those definitions and rules exist in a structured form the agent can inspect, the prompt is just a reminder. It is not a mechanism.

That is the core reliability gap in many AI agent implementations. Teams assume the model will infer the right business meaning from surrounding text, naming conventions, or a few examples. Sometimes it does. Often it does not. And when it fails, it fails in ways that look convincing.

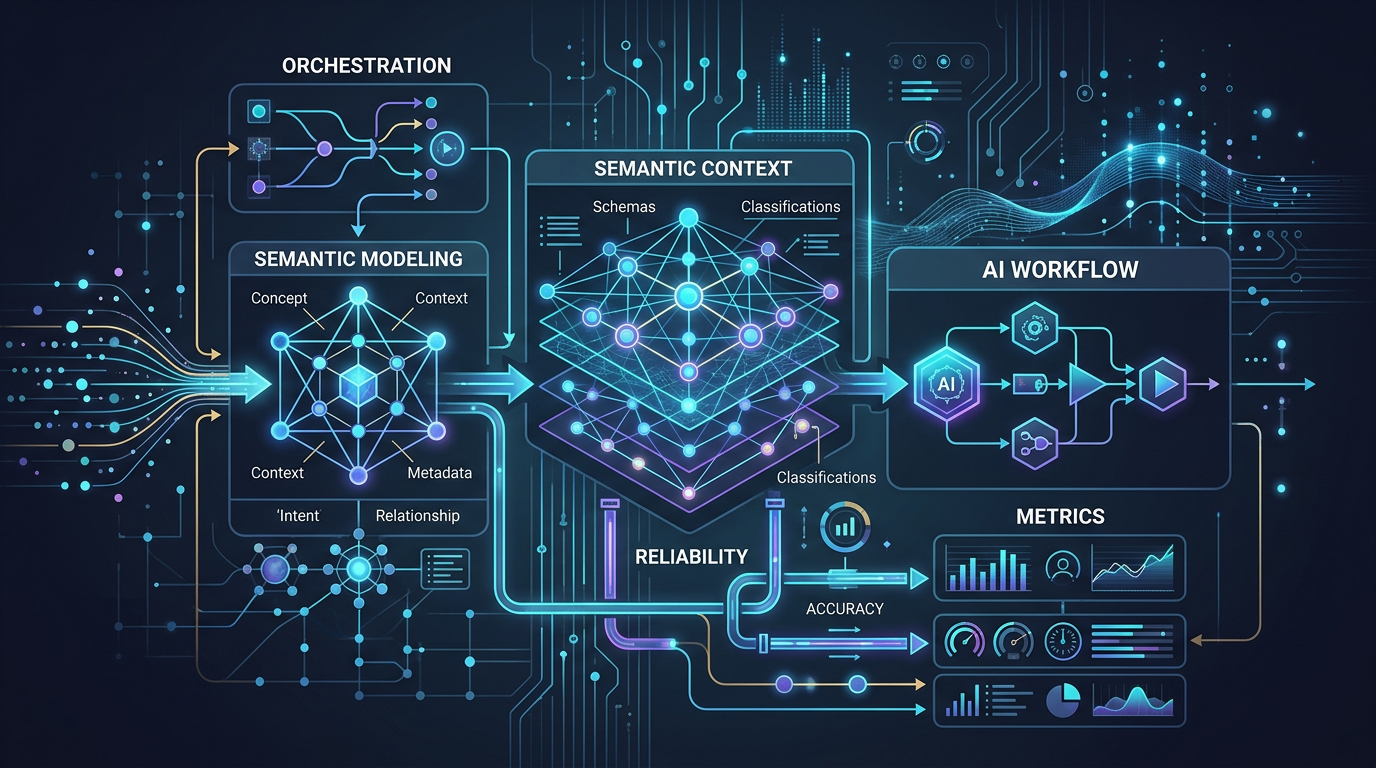

What semantic context includes in practice

In production data systems, semantic context usually combines several layers of meaning:

Business definitions

What counts as revenue, active users, conversion, churn, retention, margin, or pipeline.Model semantics

Entity definitions, dimensions, measures, joins, grains, and aggregation rules.Metric logic

How a metric is computed, filtered, and rolled up across time and dimensions.Lineage and provenance

Where data came from, which transformations produced it, and what downstream assets depend on it.Operational constraints

Which systems are read-only, which actions need review, what environments are safe, and what workflows are approved.Reference knowledge

Platform documentation, metadata, canonical SQL patterns, and reusable workflow knowledge.

This is why the strongest data agents look less like isolated chat interfaces and more like systems that connect metadata, semantic models, metrics, lineage, knowledge, and execution.

What breaks without semantic context

The most useful way to understand semantic context is to look at failure modes.

1. The agent answers the wrong question correctly

This is the classic analytics failure.

A stakeholder asks: “What was net revenue by region last quarter?”

The agent sees orders, payments, and refunds. It writes valid SQL. The query runs. The chart renders. But the answer is still wrong because the agent used booked revenue instead of recognized revenue, ignored returns, and grouped by shipping destination rather than sales territory.

Nothing broke technically. Semantics broke.

2. The agent picks the nearest table, not the right concept

Data warehouses are full of near-duplicates:

usersapp_userscustomer_profilesactive_users_dailydim_user

An agent without structured context often chooses the object with the most obvious name, not the one that matches the business concept. In a modern stack, names alone are weak signals. Semantic models and metadata exist because raw storage structures are not the same thing as trusted analytical meaning.

3. The agent joins data at the wrong grain

This one quietly ruins dashboards.

Suppose an agent joins an order-level fact table to a session-level table to answer a conversion question. The SQL executes, but the result multiplies rows and inflates counts. If the agent has no semantic model for entity relationships, join paths, and valid aggregation logic, it is guessing.

The result is not “slightly off.” It is structurally unsound.

4. The agent uses inconsistent metric definitions across tools

One team defines active customers in dbt. Another uses a BI metric. A third has a notebook-specific version with slightly different filters. The agent may retrieve all three and blend them into a single answer.

That creates a reliability problem that no prompt can solve. The agent needs a governed metric layer or other structured metric context that tells it which definition is canonical, when it applies, and how it should be queried.

5. The agent can act, but cannot reason about consequences

Execution without context is where agentic systems become risky.

Imagine an agent asked to “optimize a slow dashboard query.” Without semantic and workflow context, it might:

- rewrite SQL in a way that changes metric logic

- materialize an intermediate table in the wrong environment

- update a dashboard definition that downstream teams depend on

- bypass an approved workflow for validation

Tool access is not enough. Reliable action requires context about meaning, dependencies, and guardrails.

Why this matters more for data agents than generic assistants

A generic assistant can often get away with approximate meaning. A data agent usually cannot.

In data systems, small semantic errors compound:

- a wrong metric changes business decisions

- a wrong join corrupts downstream analysis

- a wrong lineage assumption breaks trust in impacted assets

- a wrong workflow step creates production risk

That is why context for data agents has to be structured, not just descriptive. The agent needs to reason over the same architecture that humans use to make data systems reliable.

Semantic context turns retrieval into reasoning

A lot of agent systems talk about “context” as retrieval: fetch more documents, add more schema, include more examples.

That helps, but it does not solve the deeper problem.

Retrieval gives an agent more material. Semantic context gives it a model of meaning.

That difference matters:

Retrieval says: here are five docs about revenue.

Semantic context says: here is the canonical revenue metric, its dimensions, grain, dependencies, filters, and approved interpretation.

Retrieval says: here are tables related to customers.

Semantic context says: this entity maps to customer account, joins through this key, rolls up at this grain, and should not be mixed with prospect records.

Reliable agents need both. But if you can only choose one for production correctness, semantic context carries more weight.

What a reliable context stack looks like

A production-minded agent stack usually needs four properties.

1. Structured meaning

The agent should be grounded in metadata, semantic models, metrics, reference SQL, and documentation that represent stable concepts instead of raw text fragments.

2. Relationship awareness

The agent should understand how assets connect: lineage, dependencies, entities, joins, workflow steps, and downstream impact.

3. Workflow awareness

The agent should know the difference between answering a question, generating a semantic model, creating metrics, executing SQL, and triggering an operational workflow.

4. Guarded execution

The agent should be able to move from context to execution with review points, permissions, and clear operational boundaries.

This is also where Datus’s framing is directionally right. The docs emphasize contextual data engineering, knowledge-base inputs such as metadata and semantic models, metrics-related capabilities, workflow orchestration, and MCP extensions. That architecture reflects a practical truth: reliable automation comes from structured context plus controlled execution, not from prompting alone.

Data-system examples where semantic context changes the outcome

Example: Executive KPI reporting

A CFO asks for weekly net revenue, CAC, and retention by segment.

Without semantic context, the agent may pull three metrics from three different sources, each with different inclusion logic.

With semantic context, the agent can:

- resolve canonical metric definitions

- use approved dimensions

- preserve consistent time grain

- explain where the result came from

- link the answer back to the underlying model or metric definition

That is the difference between a flashy demo and a system a finance team can trust.

Example: Debugging a pipeline regression

A data engineer asks why a dashboard dropped 18% after a model change.

Without semantic context, the agent can inspect logs and guess.

With lineage, metadata, and semantic awareness, it can reason more usefully:

- identify the upstream model change

- locate impacted metrics and dashboards

- compare reference SQL or prior logic

- surface which semantic definition changed

- propose the narrowest fix path

Example: Generating a semantic model for a new domain

A team wants to onboard subscription analytics.

Without context, an agent might generate a superficial model from table names.

With metadata, reference SQL, metrics conventions, and entity relationships, it can create a semantic model that is closer to how the business already reasons about subscriptions, invoices, MRR, churn events, and plan changes.

That reduces rework and lowers the gap between generated artifacts and production-ready ones.

A practical checklist for AI agent reliability

If you are evaluating an agent system for real data work, ask these questions:

- Does the agent have access to canonical metric definitions?

- Can it distinguish physical schema from business meaning?

- Does it understand valid joins, grains, and entity relationships?

- Can it reason over lineage and downstream impact?

- Does it use structured metadata rather than only text snippets?

- Can it explain why it chose a metric, table, or workflow path?

- Are execution steps bounded by permissions and review?

- Can humans inspect or correct the context layer over time?

If the answer to most of these is no, the system may still be useful for exploration. It is not ready for reliable production work.

The real shift: from fluent answers to grounded action

The next generation of data agents will not win because they sound better. They will win because they are grounded in the right context model.

That means moving beyond the shallow idea that context is just extra prompt text. In serious data environments, context has to carry semantics, lineage, constraints, and workflow meaning. Otherwise the agent remains a guesser with good grammar.

Semantic context is what lets an agent move from intent to reliable execution. It is what keeps “correct-looking” from becoming “quietly wrong.” And for teams building AI into real data workflows, that is the difference that matters.

Summary

If you want AI agent reliability, start here:

- prompts shape behavior, but semantics shape correctness

- retrieval adds information, but structured context adds meaning

- tool access enables action, but context determines whether action is safe and useful

- data agents need metadata, semantic models, metrics, lineage, and workflows to operate reliably

That is not extra infrastructure. It is the operating substrate for production-grade agents.

Next steps

- Read Semantic Modeling for Agentic Analytics Workflows

- Explore the semantic layer and MetricFlow-related sections in Datus Docs

Related Reading

- How MCP Changes Data Workflow Automation

- AI Data Pipeline Automation: Use Cases, Architecture, and Tradeoffs

- Agentic Data Engineering vs Traditional Data Engineering

- What Is a Data Engineering Agent? A Practical Guide with Datus

Start with Datus

- Docs: Datus Docs

- Semantic context and workflow docs: Datus Docs

- GitHub: https://github.com/Datus-ai/Datus-agent

- Main site: https://datus.ai