Why Reliable Data Agents Need More Than Good Prompts

A prompt can help a data agent start. It cannot make a data agent reliable.

That distinction matters more than most teams expect. In demos, a well-written prompt can produce an impressive answer, generate a SQL query, or outline a transformation plan. In production, the standard is different. The agent has to understand what a metric means, which tables are trusted, what has already happened in the workflow, what actions are allowed, and how to fail safely when context is incomplete.

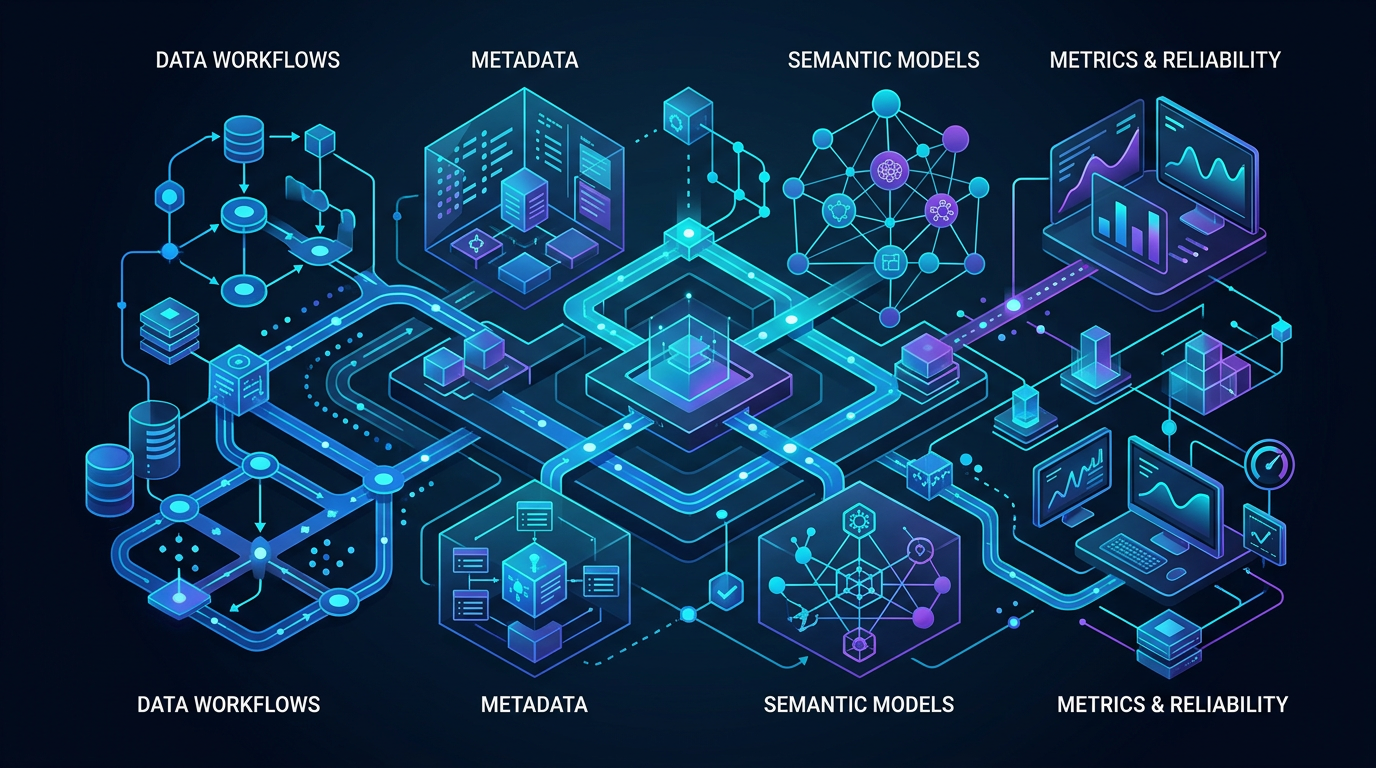

That is why reliable data agents need more than prompt engineering. They need structured context, workflow-aware execution, semantic grounding, metrics definitions, and guardrails that keep automation aligned with real data systems.

For data teams, the problem is not getting an agent to say something plausible. The problem is getting an agent to produce work that is correct enough to trust, repeatable enough to operationalize, and controlled enough to use inside production workflows.

The short version

If you want reliable data agents, prompts are only one layer.

What actually improves reliability:

- Context: schema, lineage, ownership, documentation, definitions, and history

- Workflow state: what has already run, what failed, what is waiting, and what comes next

- Metrics and semantics: shared definitions for entities, dimensions, and business logic

- Guardrails: permissions, validations, approvals, and policy boundaries

- Execution systems: tools and workflows that let the agent act on the right system in the right order

A strong prompt without these layers usually creates confident output. It does not create dependable operations.

Why prompts alone break down in data workflows

Prompting works best when the task is mostly linguistic: summarize text, rewrite content, classify a paragraph, draft an email. Data engineering and analytics workflows are different. They are grounded in systems, dependencies, definitions, and operational constraints.

A prompt can say:

Investigate why revenue dropped last week and fix the broken pipeline.

That sounds clear. But for a data agent, it leaves critical questions unanswered:

- What is the canonical definition of revenue?

- Which model or metric should be used?

- Which pipeline owns that metric?

- What changed last week?

- Which warehouse, semantic layer, or orchestration system is in scope?

- Is the agent allowed to patch production jobs or only create a remediation plan?

- What validations must pass before any change is approved?

If the agent cannot answer those questions from structured context, it will improvise. In data work, improvisation is usually where reliability starts to break.

Reliable data agents operate on context, not just instructions

The core job of a data agent is not to generate a nice answer. It is to interpret intent in the context of a real data environment.

That means the agent needs access to operational knowledge such as:

- data asset metadata

- semantic definitions

- lineage relationships

- workflow dependencies

- quality signals

- run history

- ownership and governance rules

- approved execution paths

This is the difference between prompt-following and contextual data engineering.

A prompt-following agent asks, "What did the user say?"

A context-aware agent asks, "What did the user ask, what does it mean in this system, what is the safest valid path, and what evidence supports the result?"

That second mode is what makes agentic data engineering viable.

Five layers that matter more than prompt quality

1. Structured context

Structured context gives the agent a grounded view of the environment. That includes schemas, table descriptions, lineage graphs, model ownership, quality signals, prior decisions, and documentation.

Without structured context, the agent fills gaps with pattern matching. It may choose a table because the name looks right. It may infer a join because it has seen similar patterns before. It may answer in a way that sounds reasonable while missing the system-specific truth.

With structured context, the agent can reason from what is actually known.

Example: A user asks for customer retention by segment. A prompt-only agent may query a customers table and a transactions table and produce an answer. A context-aware agent knows which retention model is governed, which segmentation logic is approved, which dimensions are stable, and whether there is already a trusted semantic definition for this analysis.

The difference is not style. It is correctness.

2. Workflow state

Many data tasks are not one-shot questions. They are steps inside longer workflows.

A reliable agent needs to know:

- whether a pipeline already failed

- whether a backfill is running

- whether a schema change is pending

- whether an upstream source is delayed

- whether a validation check already blocked deployment

- whether a human approval is required before execution continues

This is workflow state. Without it, an agent can make locally sensible decisions that are globally wrong.

Failure mode: An agent sees a stale downstream table and decides to rerun a transformation. In reality, the upstream ingestion is still incomplete, and the right action is to wait, escalate, or reroute around partial data. The prompt might be fine. The missing workflow state is the real problem.

3. Metrics and shared business definitions

Metrics are where many promising agent demos fail in production.

The agent may be able to generate SQL. That does not mean it understands the business definition behind a KPI. Reliable analytics work requires shared definitions for measures, dimensions, filters, time grains, and entities.

If "active customer," "net revenue," or "qualified pipeline" means different things across teams, prompt quality will not save the output. The agent needs a reliable reference point.

This is why metrics layers and governed definitions matter so much in agentic analytics workflows. They reduce ambiguity before generation begins.

Example: Ask three analysts to define monthly active users in a growing company and you may get three valid but inconsistent answers. A reliable data agent should not invent a fourth. It should resolve to the governed definition or surface the ambiguity explicitly.

4. Semantic models

Semantic models turn raw data structures into business-meaningful structures. They help the agent understand entities, relationships, dimensions, metrics, and reusable logic in a way that raw warehouse metadata alone cannot.

In practice, semantic modeling improves reliability because it gives the agent better defaults and clearer constraints.

Instead of seeing only:

- table names

- column types

- loose descriptions

The agent can reason over:

- canonical entities

- approved joins

- metric formulas

- dimensional hierarchies

- business-friendly naming

- reusable definitions across workflows

That matters because most data failures are not syntax failures. They are meaning failures.

5. Guardrails and execution controls

Reliable automation always needs boundaries.

In data systems, guardrails often include:

- role-based access and tool permissions

- environment separation for dev, staging, and prod

- validation checks before execution

- human review for sensitive operations

- policy rules for destructive or high-impact actions

- observability and audit trails after the action runs

A good prompt can ask the agent to "be careful." That is not a guardrail. A real guardrail is enforceable.

If an agent can modify models, trigger workflows, or change production-facing assets, those controls cannot live only in prose.

Prompting vs reliability: a practical comparison

| Layer | What prompting helps with | What prompting does not solve |

|---|---|---|

| Task framing | Clarifies user intent and expected output | Does not define system truth |

| Query generation | Helps produce SQL, plans, or summaries | Does not guarantee metric correctness |

| Reasoning style | Improves step-by-step explanation | Does not add missing lineage or semantic context |

| Tool usage hints | Suggests what action to take | Does not enforce permissions or safe execution |

| Output quality | Can improve clarity and structure | Does not create repeatability, governance, or control |

Prompting still matters. It is useful for expressing intent, shaping output, and guiding reasoning. It is just not the main source of reliability.

Common failure modes when teams over-index on prompts

Teams often discover the same pattern: the better the demo looks, the more surprising the production failure feels.

Here are the common failure modes.

The agent picks the wrong table for the right question

The prompt is fine. The warehouse has multiple similarly named tables. The agent selects a deprecated or intermediate model because it lacks lineage, ownership, or semantic guidance.

The agent generates valid SQL for an invalid metric

The query runs. The number is wrong. The issue is not syntax. The issue is that the metric definition lives in scattered tribal knowledge instead of a governed semantic layer.

The agent acts without understanding workflow timing

A job reruns too early, backfills incomplete data, or opens a noisy incident because the agent cannot see upstream status or downstream dependencies.

The agent gives a plausible answer when it should escalate uncertainty

When context is missing, many agents still try to complete the task. That creates polished but risky output. Reliability improves when the system can say, "I do not have the semantic definition required to answer this safely."

The agent has tools, but no operating boundaries

Tool access without guardrails turns a reasoning problem into a change-management problem. The failure is not that the prompt was weak. The failure is that execution was not constrained.

What reliable data agents look like in practice

Reliable data agents usually share a few design patterns.

They are grounded in a system of record

Instead of relying on prompt memory alone, they pull from structured metadata, documentation, semantic definitions, and operational signals.

They reason with workflow awareness

They understand where a task sits in the larger flow: discovery, analysis, validation, execution, monitoring, or remediation.

They separate planning from action

They can propose a plan, validate assumptions, and only then take approved actions through the right tools.

They surface evidence, not just answers

They point to the metric definition, lineage path, model source, or validation result that supports the action.

They fail safely

When context is incomplete or permissions are missing, they stop, ask, or escalate instead of inventing confidence.

A simple example: the same request, two different agents

Consider this request:

Find why weekly conversion dropped after the latest release and suggest the next step.

Prompt-only agent

The agent searches schema names, generates a few queries, compares week-over-week results, and proposes that the release changed conversion logic.

It might be persuasive. It might also be wrong.

What it may miss:

- the governed definition of conversion

- the approved event model

- a known ingestion delay after the release

- a semantic dimension change in the metrics layer

- an existing failed validation run tied to the same issue

Context-aware, workflow-aware agent

The agent starts from the governed metric definition, checks semantic models and lineage, reviews recent workflow runs, sees a failed test on event attribution after the release, identifies the affected model owner, and proposes either a safe rollback, a targeted fix, or a human review depending on permissions.

That is not just a better answer. It is a more reliable operating model.

Where semantic context fits in

Semantic context is what turns raw data access into business-aware reasoning.

For data agents, semantic context often includes:

- definitions of metrics and dimensions

- entity relationships

- approved join logic

- naming conventions that map technical assets to business meaning

- rules for time grains, filters, and aggregation

- governed interpretations of key KPIs

This is one reason Datus emphasizes structured context, semantic modeling, and workflow-aware execution. Reliable automation in data systems needs more than language fluency. It needs system fluency.

Where tools and runtime fit in

Reliable data agents also need the right execution layer.

A prompt can describe an action. A runtime determines whether the action can be carried out safely, with the right tools, in the right order, under the right controls.

That is why production-minded agent systems usually connect prompting to:

- tool access

- workflow orchestration

- approval steps

- execution logs

- observability

- governance policies

In other words, reliability comes from the full path from intent to execution, not from the text prompt at the start.

A practical framework for evaluating data agent reliability

If you are evaluating a data agent, ask these questions:

- Context: What structured context does the agent have access to?

- Semantics: Does it understand governed metrics and business definitions?

- State: Can it see workflow history, current run status, and dependencies?

- Execution: Can it use tools in a controlled and auditable way?

- Guardrails: What prevents unsafe or low-confidence actions?

- Evidence: Can it show why it reached a conclusion?

- Escalation: Does it know when to stop and ask for review?

Those questions usually tell you more than any prompt benchmark.

The real shift: from good prompting to reliable systems

The most important shift in agentic data engineering is architectural.

Teams start by asking, "How do we write better prompts?"

They eventually ask a better question:

What system does the agent need around it to produce reliable outcomes?

That is the real production question.

Reliable data agents are built, not merely prompted. They are grounded in semantic context, connected to workflow state, measured against governed definitions, and constrained by guardrails that make execution trustworthy.

Prompting still has a role. It is just not the foundation.

Next steps

If you are building data agents for real workflows, start with the reliability layers first:

- Why AI Agents Need Semantic Context to Work Reliably

- How MCP Changes Data Workflow Automation

- AI Data Pipeline Automation: Use Cases, Architecture, and Tradeoffs

- Explore the Datus docs to see how structured context, workflow-aware execution, and production guardrails fit together.

FAQ

What makes a data agent reliable?

A reliable data agent combines prompt understanding with structured context, semantic definitions, workflow awareness, controlled tool use, and guardrails. Reliability comes from the full system around the agent, not from prompt quality alone.

Why are prompts not enough for data agents?

Prompts can express intent, but they cannot supply missing metadata, lineage, metric definitions, permissions, or workflow state. In production data environments, those layers determine whether the agent is correct and safe.

What is the difference between context and a prompt?

A prompt is the instruction given to the model. Context is the structured information the agent uses to interpret that instruction accurately inside a real system. For data agents, context often includes schemas, lineage, semantic models, metrics, documentation, and run history.

Why do semantic models improve agent reliability?

Semantic models reduce ambiguity. They help agents reason over governed entities, dimensions, metrics, and joins instead of guessing from raw tables and columns. That leads to more consistent and business-aligned outputs.

What guardrails should data agents have?

Useful guardrails include permissions, environment boundaries, validation checks, approval flows, audit logs, and rules for when the agent must escalate instead of act autonomously.

Can prompt engineering still help?

Yes. Prompting helps with task framing, output format, and reasoning quality. It is valuable, but it should sit on top of semantic context, workflow state, metrics governance, and controlled execution rather than replace them.