Datus 0.2.6 Release: Equipping the Agent with a Brain

If Datus 0.2.5 and earlier versions focused on expanding the underlying toolchain and execution capabilities (such as introducing Skill and MCP mechanisms), the core of 0.2.6 lies in enhancing the Agent's autonomous planning and decision-making logic.

Building upon the previously established tool ecosystem, 0.2.6 introduces a new General Chat Agent, upgrading its workflow from single, targeted query generation to a complete pipeline of "Intent Understanding -> Task Planning -> Tool Invocation". Under this architecture, SQL generation is no longer the hardcoded endpoint of the system, but rather one of many tools the Agent can autonomously schedule based on business context.

Optimizing the Data Exploration Pipeline and Lowering Contextual Cognitive Barriers

In practical data analysis scenarios, the core pain point often lies not in writing SQL syntax, but in sorting out the prerequisite context - such as clarifying unknown table structures, verifying complex metric definitions, or retrieving historical query records. The 0.2.6 release specifically addresses this by refining the Agent's upfront data exploration and context confirmation mechanisms, systematically eliminating these prerequisite barriers to analysis.

Two Core Upgrades

- General Understanding -- Autonomous Scheduling
  - No longer limited to "only outputting SQL".
  - Upon receiving a task, it autonomously breaks it down and schedules the appropriate tools: whether it's inspecting the Schema, searching the knowledge base, reading/writing files, delegating SQL tasks, actively accumulating knowledge, or generating analysis reports, everything is executed in an orderly manner.

- Planning -- Think Before Acting
  - Press Shift+Tab to activate planning mode.
  - Before touching the database, it generates a step-by-step execution plan.
  - Execution proceeds only after manual review and confirmation, providing safety guarantees for cost-sensitive and regulated environments.

Core Features

Agent Generalization -- Breaking the SQL Boundary

Architecture

    User prompt
        │
        ▼
    ChatAgenticNode
        ├─ DBFuncTool             list_tables, describe_table, read_query, …
        ├─ ContextSearchTools     search_metrics, search_reference_sql, search_knowledge,
        │                         list_subject_tree, get_metrics, get_reference_sql, …
        ├─ DateParsingTools       get_current_date, parse_temporal_expressions
        ├─ FilesystemFuncTool     read_file, write_file, list_directory, search_files, …
        ├─ PlatformDocSearchTool  list_document_nav, get_document, search_document, …
        ├─ SkillFuncTool          skill_list, skill_execute_command, user-defined skills
        └─ SubAgentTaskTool       task(type, prompt, description)
                │
                ├─ gen_sql             → GenSQLAgenticNode
                ├─ gen_report          → GenReportAgenticNode
                ├─ gen_semantic_model  → GenSemanticModelAgenticNode
                ├─ gen_metrics         → GenMetricsAgenticNode
                ├─ gen_sql_summary     → GenSQLSummaryAgenticNode
                ├─ gen_ext_knowledge   → GenExtKnowledgeAgenticNode
                └─ <custom subagent>   → configured in agent.yml

New Workflow and Scenario Comparison

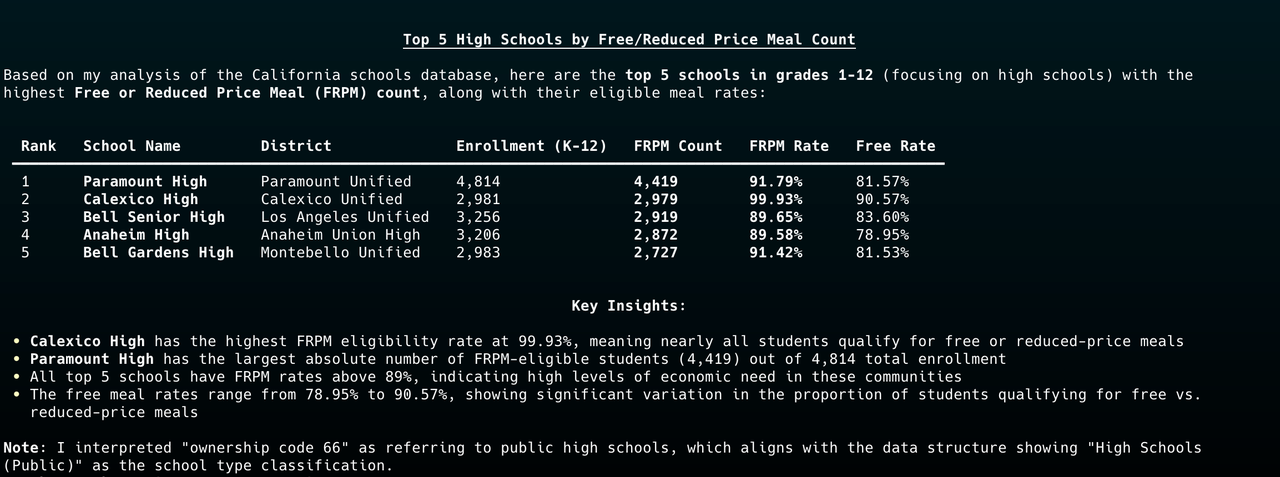

The Agent's current operational logic is: Collect Background -> Reason & Plan -> Execute/Delegate -> Verify in Real Environment -> Output Results.
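The Execute/Delegate step can be pictured as a lookup from a task type to a sub-agent node, following the `task(type, prompt, description)` entry in the architecture diagram. The sketch below is illustrative only: the `Delegation` class, the `SUBAGENT_NODES` table, and the error handling are assumptions made for this example, and the node names are used as plain labels rather than imported from Datus.

```python
from dataclasses import dataclass

# Type-to-node mapping copied from the architecture diagram.
# The node names are plain strings here, not real Datus imports.
SUBAGENT_NODES = {
    "gen_sql": "GenSQLAgenticNode",
    "gen_report": "GenReportAgenticNode",
    "gen_semantic_model": "GenSemanticModelAgenticNode",
    "gen_metrics": "GenMetricsAgenticNode",
    "gen_sql_summary": "GenSQLSummaryAgenticNode",
    "gen_ext_knowledge": "GenExtKnowledgeAgenticNode",
}


@dataclass
class Delegation:
    """Hypothetical record of one delegated task."""
    node: str
    prompt: str
    description: str


def task(type: str, prompt: str, description: str) -> Delegation:
    """Hypothetical stand-in for SubAgentTaskTool.task: choose a node by type."""
    try:
        node = SUBAGENT_NODES[type]
    except KeyError:
        raise ValueError(f"unknown subagent type: {type!r}") from None
    return Delegation(node=node, prompt=prompt, description=description)
```

In this picture, a custom sub-agent configured in agent.yml would simply add another entry to the lookup table; how Datus actually registers such entries is not shown here.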

Scenario: Identify underprivileged schools in California with outstanding performance.

| Aspect | Before (Version 0.2.5) | Now (Version 0.2.6) |
| --- | --- | --- |
| Understanding Approach | Requires precise instructions (e.g., “write a SQL query to find…”) | Understands abstract questions (e.g., “Which schools in California have broken the norm?”) |
| Execution Process | Directly generates a SQL query; task ends. | Analyzes table schema → extracts the business definition of “underprivileged” → matches historical SQL from the knowledge base → constructs prompts → delegates SQL generation and validation. |
| Final Output | Outputs only SQL code. | Outputs natural language analysis + validated SQL + query results + data quality insights (e.g., explanations for missing values). |

Explore Task Tool -- Explore First, Analyze Later

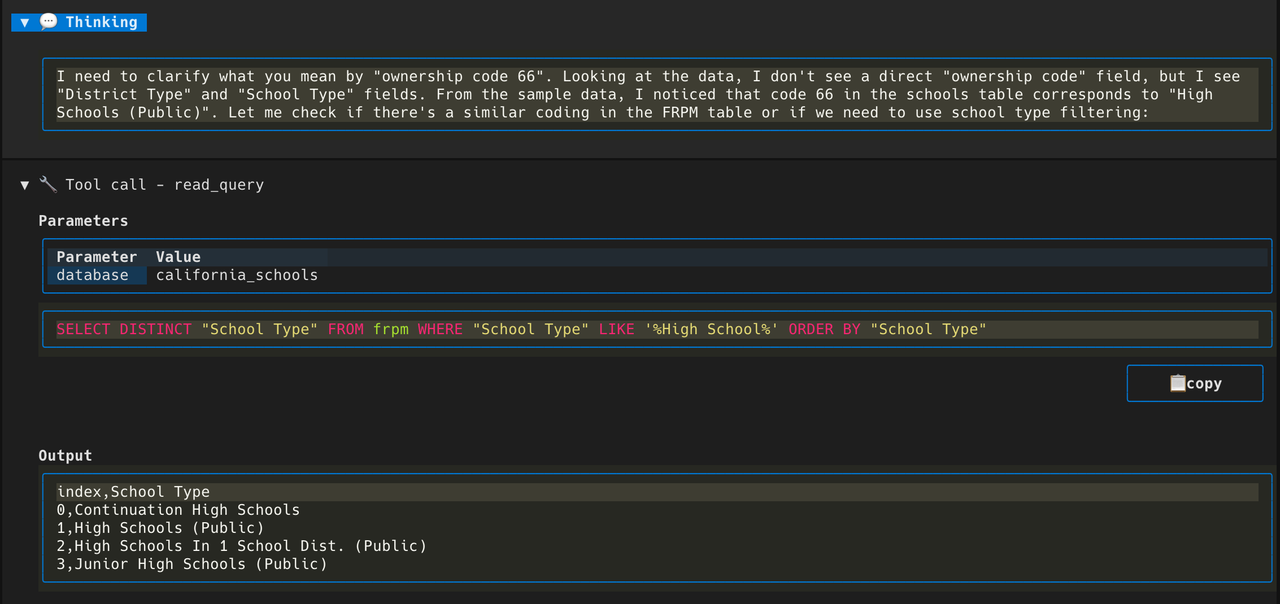

The newly added Explore Task Tool provides a natural language-driven database exploration mechanism. Its core lies in endowing the Agent with the ability to proactively retrieve and "observe" the actual content of the database.

This tool primarily addresses two pain points in actual interaction: first, lowering the cognitive barrier for users when facing unfamiliar databases; second, effectively avoiding blind guessing (hallucinations) by the Agent when lacking underlying data context.

By introducing this mechanism, the Agent's execution pipeline becomes more rigorous and complete. Its workflow shifts to "Preliminary Exploration -> Understand Data Structure -> Generate Query Based on Real Data". From a technical evolution perspective, this capability is also a further extension and practical application of the semantic tool optimization and knowledge enhancement features from version 0.2.5.
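The "explore first" idea can be illustrated with a small, self-contained sketch using Python's built-in `sqlite3` module. This is not the Explore Task Tool itself: the helper names below echo `list_tables` and `describe_table` from the architecture diagram, but their bodies, the `sample_rows` and `explore` helpers, and the `schools` demo table are assumptions made for this example.

```python
import sqlite3


def list_tables(conn):
    """Return table names from SQLite's catalog."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return [r[0] for r in rows]


def describe_table(conn, table):
    """Return (column, declared type) pairs for a table."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk).
    return [(r[1], r[2]) for r in conn.execute(f'PRAGMA table_info("{table}")')]


def sample_rows(conn, table, limit=3):
    """Fetch a few real rows so later SQL is grounded in actual values."""
    return conn.execute(f'SELECT * FROM "{table}" LIMIT ?', (limit,)).fetchall()


def explore(conn):
    """Collect schema and sample data for every table before writing a query."""
    # Table names come from the catalog itself, not from user input.
    return {
        t: {"columns": describe_table(conn, t), "sample": sample_rows(conn, t)}
        for t in list_tables(conn)
    }


# Hypothetical demo data, loosely based on the schools scenario.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE schools (name TEXT, county TEXT, free_meal_rate REAL)")
conn.executemany(
    "INSERT INTO schools VALUES (?, ?, ?)",
    [("A", "Alameda", 0.82), ("B", "Fresno", None)],
)
context = explore(conn)
```

The resulting `context` dictionary exposes the missing `free_meal_rate` value before any analytical query is written, which is the kind of observation that exploration is meant to surface.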

Plan Mode Optimization -- Long-Range Tasks, Fully Controlled

In version 0.2.5, the system built the initial prototype of Plan Mode by having the Chat node inherit from the GenSQL agentic node. This 0.2.6 release further optimizes Plan Mode. Architecturally, this mode references the design philosophy of Claude Code, and the next version will support Plan persistence.

This mechanism is primarily used to optimize the execution controllability of complex tasks, and its core workflow includes the following features:

- Upfront Planning and Confirmation: Before calling underlying tools to perform actual operations, the Agent will pre-generate a structured execution plan and submit it to the user to confirm the specific execution method.

- Dynamic Progress Visualization: During task execution, the system displays the current progress and active node in real time, significantly reducing the tracking cost of long-range tasks.

- Step-by-Step Feedback and Correction: The execution plan has dynamic adjustment capabilities. At each step of the workflow, the system supports receiving feedback, allowing users to intervene at any time and modify the subsequent plan pipeline.
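The three features above can be sketched as a confirm-then-execute loop. The code below is a minimal illustration under stated assumptions, not Datus's implementation: `Step`, `run_plan`, and the `confirm`, `feedback`, and `on_progress` callbacks are all hypothetical names introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    """Hypothetical plan step: a description plus the work to perform."""
    description: str
    action: Callable[[], str]


def run_plan(
    plan: List[Step],
    confirm: Callable[[List[Step]], bool],
    feedback: Optional[Callable[[Step, str, List[Step]], List[Step]]] = None,
    on_progress: Callable[[str], None] = print,
) -> List[str]:
    """Run a plan only after approval, reporting progress along the way."""
    # Upfront confirmation: nothing executes unless the user approves the plan.
    if not confirm(plan):
        return []
    results = []
    remaining = list(plan)
    while remaining:
        step = remaining.pop(0)
        # Progress display: report the active step and how many are left.
        on_progress(f"running: {step.description} ({len(remaining)} left)")
        result = step.action()
        results.append(result)
        # Step-by-step correction: feedback may rewrite the remaining steps.
        if feedback is not None:
            remaining = list(feedback(step, result, remaining))
    return results
```

Passing a `feedback` callback that returns a shortened or reordered list models the user changing the rest of the plan mid-run; returning the list unchanged continues as planned.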

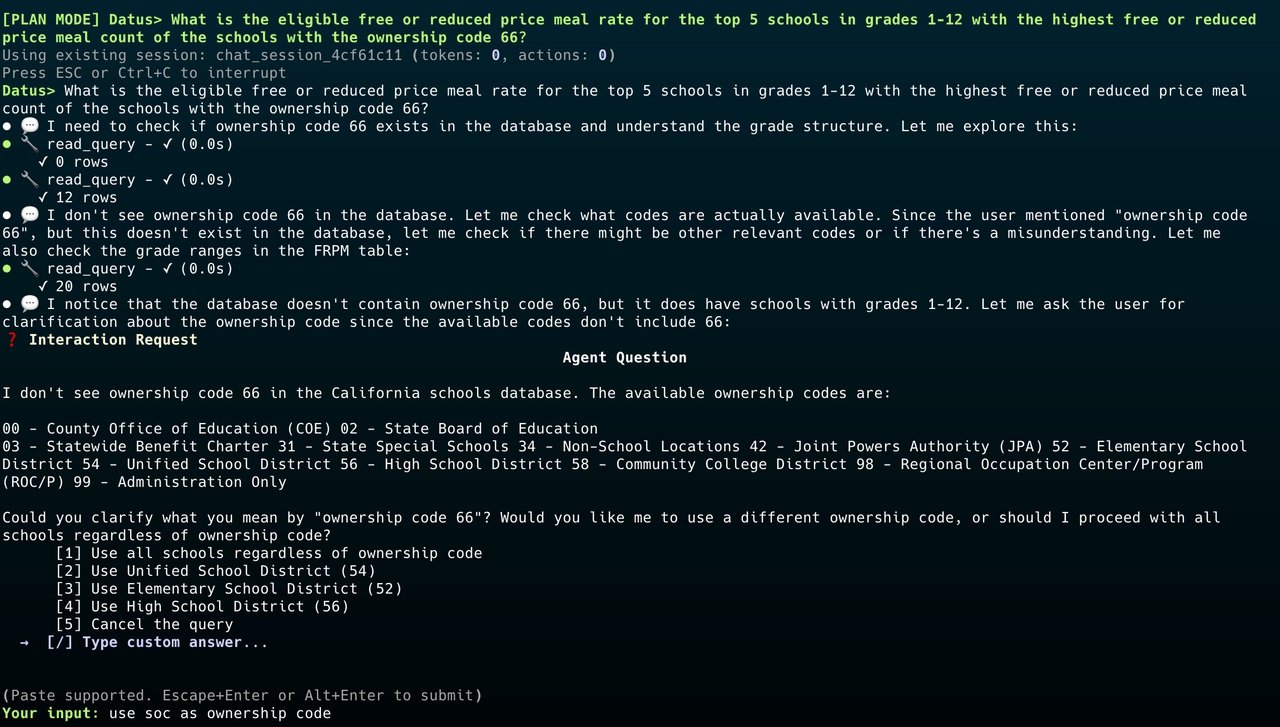

AskUser Tool -- Proactively Ask, Don't Guess

In 0.2.5, the Agent tended to infer user intent from implicit assumptions when it faced ambiguous input. Because the execution pipeline had no way to pause and ask for confirmation, this one-way generation mode significantly increased the probability of erroneous queries and analysis results.

The AskUser Tool introduced in 0.2.6 effectively solves this problem. This tool allows the Large Language Model (LLM) to proactively suspend the current task and initiate a question to the user when it identifies missing context or unclear intent, rather than arbitrarily filling in information. By transforming the workflow from "unidirectional guessing" to "on-demand confirmation", this mechanism further reduces the risk of AI hallucination from the content generation side. This complements the strengthened parameter validation (String Validation) in 0.2.5, jointly improving the reliability of system outputs.
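The shift from guessing to on-demand confirmation can be sketched as follows. This is a hedged illustration only: the `REQUIRED` fields, the `fill_missing` function, and the `ask_user` callback are hypothetical and do not describe the actual AskUser Tool interface.

```python
from typing import Callable, Dict, Optional

# Hypothetical fields an analysis request needs before it can run.
REQUIRED = ("metric", "time_range")


def fill_missing(
    request: Dict[str, Optional[str]],
    ask_user: Callable[[str], str],
) -> Dict[str, Optional[str]]:
    """Ask for each missing field instead of silently inventing a value."""
    resolved = dict(request)
    for key in REQUIRED:
        if not resolved.get(key):
            # Pause and ask; the answer comes from the user, not the model.
            resolved[key] = ask_user(f"Which {key.replace('_', ' ')} should I use?")
    return resolved
```

In an interactive session `ask_user` could be as simple as Python's built-in `input`; fields that are already present never trigger a question.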

Storage Pluginization -- Flexible and Extensible

With the expansion of Datus, the original storage architecture exposed two structural issues hindering platform development, which directly impacted enterprise-grade self-hosted deployments (such as using PostgreSQL, pgvector, or Milvus) and the construction of the plugin ecosystem:

- Deep Coupling of Vector Storage (LanceDB Lock-in): In the original architecture, various modules (such as feedback, metrics, Schema metadata, etc.) directly imported and constructed LanceDB objects. Switching or extending the vector backend meant modifying dozens of scattered files.

- Lack of Abstraction in Relational Storage: Native SQLite3 calls were scattered across modules like task queues, subject trees, and session storage. Due to the lack of a unified abstraction interface, the system could not smoothly migrate to hosted databases, nor could it establish consistent error-handling boundaries.

Core Change: From Hardcoding to Backend-Agnostic Abstraction

This release completely decouples Datus's storage system from any specific database implementation:

- Introduction of a Unified Abstraction Layer: All internal storage (session history, task queues, reference SQL, etc.) is no longer hardcoded and bound to LanceDB and SQLite, but is entirely placed behind clear, backend-agnostic abstraction interfaces.

- Dynamic Pluggability: Specific underlying database implementations have been transitioned to a pluggable mode. The system can dynamically discover and load appropriate storage backends at runtime, requiring zero modifications to application-layer code.

This refactoring completely clears the underlying obstacles for Datus to move towards more flexible enterprise-grade deployments.
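A backend-agnostic interface with pluggable implementations can be sketched in a few lines. Everything named below (`SessionStore`, `register_backend`, `create_store`, and the in-memory backend) is hypothetical and chosen for illustration; the actual Datus interfaces and discovery mechanism may differ.

```python
from typing import Callable, Dict, List, Protocol


class SessionStore(Protocol):
    """Hypothetical storage interface that application code depends on."""

    def append(self, session_id: str, message: str) -> None: ...

    def history(self, session_id: str) -> List[str]: ...


# Registry of backend factories, keyed by a configuration name.
_BACKENDS: Dict[str, Callable[[], SessionStore]] = {}


def register_backend(name: str):
    """Decorator that makes a backend discoverable under `name`."""
    def decorator(factory):
        _BACKENDS[name] = factory
        return factory
    return decorator


@register_backend("memory")
class InMemorySessionStore:
    """Toy backend; a SQLite or PostgreSQL one would expose the same methods."""

    def __init__(self) -> None:
        self._data: Dict[str, List[str]] = {}

    def append(self, session_id: str, message: str) -> None:
        self._data.setdefault(session_id, []).append(message)

    def history(self, session_id: str) -> List[str]:
        return list(self._data.get(session_id, []))


def create_store(name: str) -> SessionStore:
    """Look up a backend by name; callers never import a concrete class."""
    try:
        return _BACKENDS[name]()
    except KeyError:
        raise ValueError(f"no storage backend registered as {name!r}") from None
```

Application code calls `create_store("memory")` and works only against the interface, so swapping backends becomes a configuration change rather than a code change.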

Ecosystem Expansion & Engineering Improvements

Database Ecosystem Expansion

Architecturally, the four new adapters follow Datus's existing conventions. Like the existing MySQL, PostgreSQL, and StarRocks adapters, they are built on the shared datus-sqlalchemy base layer, which keeps the underlying logic stable and consistent:

    datus-sqlalchemy (base)
        ├── datus-mysql
        ├── datus-postgresql
        ├── datus-starrocks
        ├── datus-hive        ← new
        ├── datus-spark       ← new
        ├── datus-clickhouse  ← new
        └── datus-trino       ← new

The addition of these four Adapters expands Datus's support within the big data ecosystem. Previously, Datus primarily focused on OLTP databases (such as MySQL, PostgreSQL) and certain cloud data warehouses (such as Snowflake, ClickZetta). With this update, Datus officially covers the Hadoop, Spark, and modern Lakehouse technology stacks, enabling direct integration with the infrastructure relied upon by most medium-to-large enterprise core data platforms.

Continuous Refinement of Developer Experience

CLI styling has been refined: press Ctrl+O to toggle between summary and detail views, matching the usage habits of Claude Code.

--subagent Parameter to Directly Open the Specified Agent Web Interface

In version 0.2.5, the system introduced Dashboard Copilot, which supports automatically extracting knowledge from Superset dashboards and generating corresponding Sub-Agents (see official documentation).

Building on this foundation, this update introduces the --subagent startup parameter. With it, users can open the Web chat page of a specified Sub-Agent directly at startup. This removes redundant navigation steps and gives users "one-click direct access" to a specific report context, so they can analyze the target dashboard's data in natural language right away.

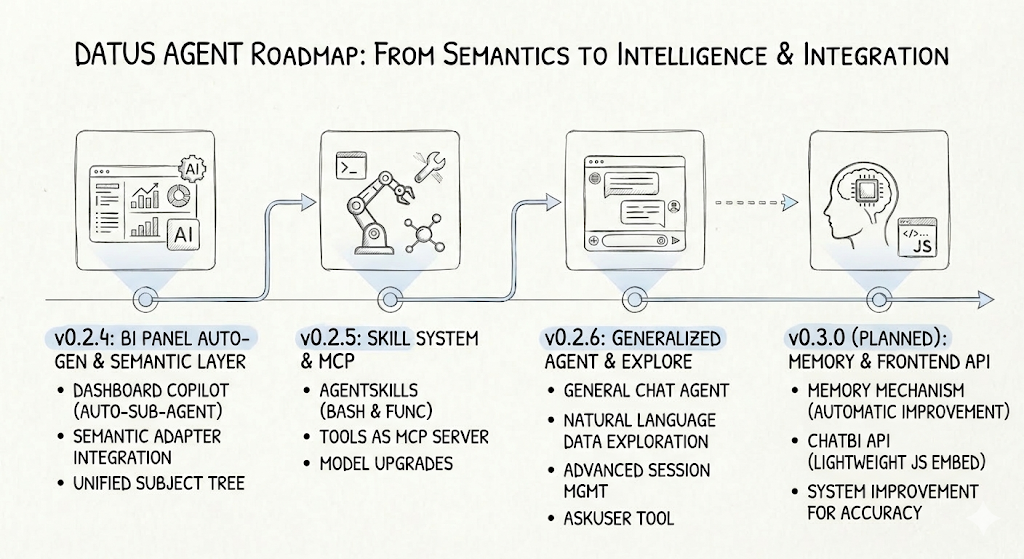

Datus Agent Roadmap: From Semantic Enhancement to Intelligent Integration

This section traces the core evolution of Datus Agent from 0.2.4 to the planned 0.3.0. Recent iterations have centered on underlying architecture refactoring, Agent capability expansion, and external ecosystem integration. The goal is to gradually transform Datus from a single-purpose data query assistant into a more extensible intelligent data hub.

- 0.2.4 Semantic Layer Refactoring and Automation: The focus was on perfecting the underlying understanding of data. We refactored the unified Subject Tree and introduced the pluggable Semantic Adapter, allowing the system to more accurately dock with external metric layers. Concurrently, the addition of Dashboard Copilot preliminarily realized the automatic generation of Sub-Agents and semantic models from BI dashboard configurations.

- 0.2.5 Introduction of Skill System and MCP Ecosystem Integration: The core was to empower the Agent with action capabilities and break system boundaries. We fully launched the AgentSkills system (supporting bash and function extensions) and exposed the Datus tool library as an MCP Server, achieving seamless docking with compatible clients like Claude Desktop.

- 0.2.6 Generalization and Deep Interactive Exploration: This version significantly improves generalization capabilities and interactive experiences in non-SQL scenarios. The General Chat Agent, combined with the newly added Explore Task Tool, supports context-isolated data exploration. The underlying layer utilizes SQLite to implement advanced session management (supporting state controls like Resume/Rewind/Interrupt) and adds the AskUser Tool mechanism to handle model uncertainty.

Looking Ahead (0.3.0): Memory Mechanisms and Developer Integration

In the upcoming 0.3.0, development focus will shift towards interaction continuity and developer friendliness:

- Memory (Long-Term Memory): We plan to introduce system-level memory functionality. The Agent will be able to automatically accumulate context based on historical conversations, thereby continuously improving behavior patterns and response accuracy in subsequent interactions.

- ChatBI API (Lightweight Embedding): To lower the integration threshold on the business side, we will provide an extremely lightweight JS access solution. Developers can seamlessly embed Datus's powerful dialogue and analytical capabilities into their own frontend applications with a single line of code.

- Plan Agent (Long-Lifecycle Tasks): We will introduce a Plan Persistence mechanism that removes the constraints of the current session lifecycle. The Agent will be able to keep task state across longer time spans and support cross-session plan recovery and progress tracking, so it can handle larger data engineering workflows.

Datus is moving towards becoming a true Data Engineer Agent, rather than just a smart SQL completion tool.

Release note: 0.2.6